Welcome to the final installment of this three-part blog series, where we go over the results of our new Similar Actor Model as they appear in Flare. As a reminder, part one discussed why we believe that using artificial intelligence to cluster malicious actors could help organizations monitor the dark web more effectively, and part two covered the methods we employed to reach that objective: the how, if you will.

Integration into Flare

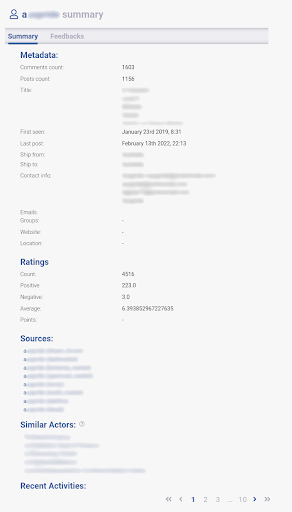

To integrate the Similar Actor Model into our Digital Risk Protection (DRP) platform, Flare, we have added a new section to the existing “actor profile” interface. This interface displays all currently known information about an actor, including, when applicable, their profile on every source we collect. If any actors are identified as similar to the one currently selected, they are displayed under the Similar Actors section, where users can click through to browse their profiles.

Figure I – Example of an Actor’s Profile page

Examples of Similar Actors

Now for the exciting part: the actual results! We have selected a few actors, in various categories, that our Similar Actor Model flagged as similar. As mentioned in part two of this blog series, our model treats words such as “hack” and “crack”, or “Fullz” and “Carding”, as similar, which matches what we know about how these terms are used. Let’s see how that translates into actual posts from actors on dark web discussion forums.

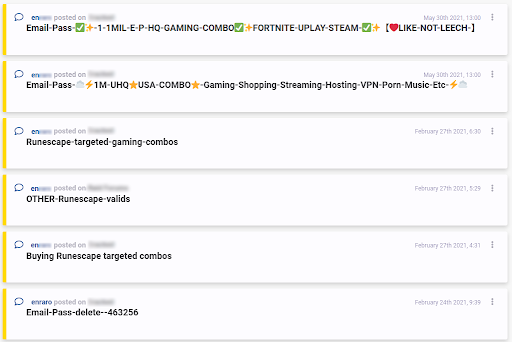

In this first example, we can see two different actors sharing what are known as “combolists”, which are essentially documents containing thousands of email address and password combinations, typically used for credential stuffing. These actors not only frequent different dark web forums but were also active at different points in time, yet our Similar Actor Model identified them as similar based on the content of their posts.

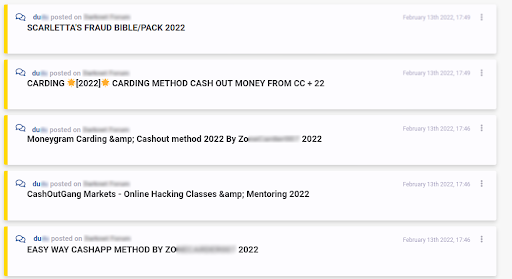

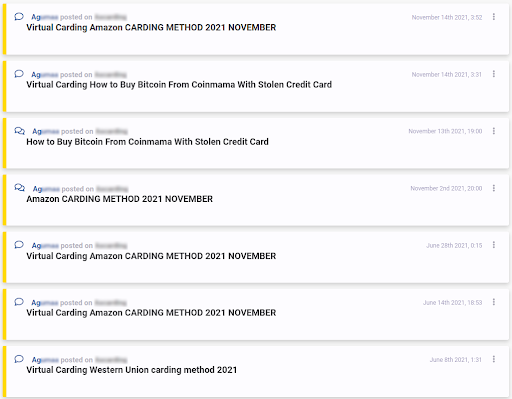

The following example shows two actors sharing various fraud methods on dark web forums, mostly related to carding – a type of fraud that exploits stolen credit cards.

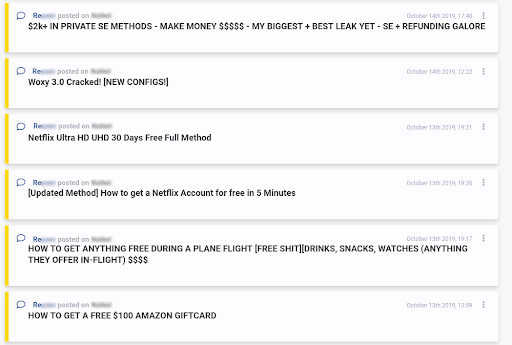

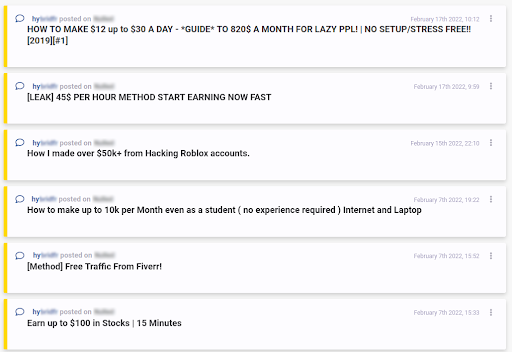

Lastly, below are examples of fraudsters sharing money-making methods. Once again, it is worth noting that these two actors were active at different points in time but were identified as similar nonetheless. One could argue that time periods should be taken into consideration when comparing actors. However, some investigations span long periods of time, and focusing on the content of the messages published by malicious actors online was the top priority when designing this new feature.
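To make the idea of content-only comparison concrete, here is a minimal sketch in Python. It is not the Similar Actor Model itself: it swaps in a simple bag-of-words cosine similarity, and the actors, post texts, years, and function names are all hypothetical. The only point it illustrates is that the `year` field is never consulted, so two actors active years apart can still score as similar.

```python
import math
import re
from collections import Counter


def bag_of_words(posts):
    """Count lowercase word occurrences across an actor's posts.

    Only the "text" field is read; the "year" field is deliberately ignored.
    """
    counts = Counter()
    for post in posts:
        counts.update(re.findall(r"[a-z0-9]+", post["text"].lower()))
    return counts


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical actors and posts, invented purely for illustration.
actor_a = [{"text": "fresh combolist email pass for credential stuffing", "year": 2018}]
actor_b = [{"text": "new combolist email pass dump", "year": 2021}]
actor_c = [{"text": "carding method with stolen credit cards", "year": 2021}]

sim_ab = cosine_similarity(bag_of_words(actor_a), bag_of_words(actor_b))
sim_ac = cosine_similarity(bag_of_words(actor_a), bag_of_words(actor_c))

print(f"A vs B (different years, similar content): {sim_ab:.2f}")
print(f"A vs C (different content): {sim_ac:.2f}")
```

In this toy setup, the two combolist posters score higher against each other than against the carding poster, even though they posted three years apart. A production model would rely on richer text representations than raw word counts, but the design choice is the same: similarity is driven by what actors write, not when they write it.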

The previous examples from Flare illustrate what our Similar Actor Model can do; we believe it will serve as a powerful investigative tool for Flare users. To try it out yourself, why not head over to our website and book a demo?

Where Do We Go From Here

At the moment, the new Similar Actor Model is still in a beta phase of sorts. It is available to all Flare users, but it is limited to actors who have created a high number of forum discussion topics. We are nonetheless very pleased with the results so far and plan to expand the scope of the project to cover vendors (dark web market sellers), as well as regular users who interact with forum topics by posting replies rather than by creating new discussion topics.

In conclusion, the completion of this project marks yet another great artificial intelligence integration into Flare, providing users with more intelligence on threat actors and additional tools for their investigations. As always, we have plenty of other exciting features coming soon to Flare; subscribe to our newsletter to stay in the know about everything related to Digital Risk Protection!